Textures

Images and textures are different things. An image is an array of color data whose width and height are measured in pixels. Textures are often built from images, but the two are not the same. The data in a texture is frequently not color at all. It can be any values that are useful to a graphics program (depth, normals, bit masks, etc.). A single texture may also be composed of several images.

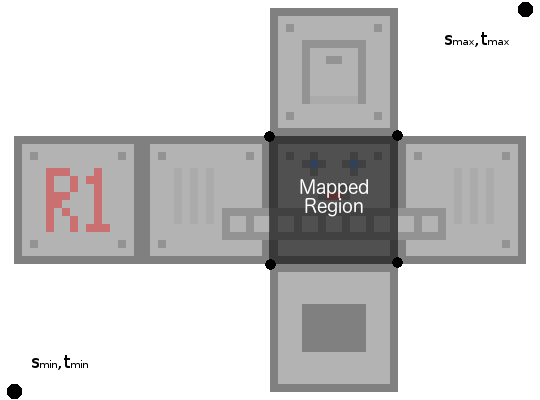

Unlike images, all textures have the same size in texture space. We will only consider 2D textures, but the same idea applies to textures of other dimensions. Texture coordinates are normalized to the range [0, 1] along each axis, regardless of the resolution of the underlying image. The images that make up textures have different pixel dimensions, so their samples are mapped into this same [0, 1] range. Thus all textures have the same size, but each contains a different number of samples within that region.
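The following Python sketch shows where the samples of a texture land in the normalized [0, 1] range. It assumes the OpenGL convention that texel i, along an axis of n texels, covers [i/n, (i+1)/n] and is sampled at its center. The function name `texel_centers` is hypothetical.

```python
def texel_centers(n):
    """Return the normalized coordinates of the n texel centers along one axis."""
    # Texel i covers [i/n, (i+1)/n]; its center sits halfway across.
    return [(i + 0.5) / n for i in range(n)]


# A 4-texel-wide and an 8-texel-wide texture both span [0, 1];
# only the number of samples inside that range differs.
print(texel_centers(4))  # [0.125, 0.375, 0.625, 0.875]
print(texel_centers(8))
```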

Texel lookup

Just as the color samples in an image are called pixels, the samples in a texture are called texels. Coordinates in texture space are conventionally named s, t, r, q. In GLSL, vector components can instead be accessed with the names s, t, p, q (since 'r' is already used for rgba component access).
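The next sketch shows a nearest-neighbor texel lookup in Python. Several details are assumptions for this example and do not come from a real API:

- the function `nearest_texel`,
- the nested-list texture layout with row 0 at the bottom,
- the clamp-to-edge behavior.

In GLSL, this work is done by the texture sampler.

```python
import math


def nearest_texel(texture, s, t):
    """Nearest-neighbor lookup at (s, t) in a 2D list of rows (hypothetical helper).

    Assumes row 0 is the bottom row (OpenGL-style origin) and clamps
    out-of-range coordinates to the edge texels, similar to GL_CLAMP_TO_EDGE.
    """
    height = len(texture)
    width = len(texture[0])
    # Scale normalized coordinates to texel units, then pick the texel containing the point.
    i = min(max(math.floor(s * width), 0), width - 1)
    j = min(max(math.floor(t * height), 0), height - 1)
    return texture[j][i]
```

Consider a 2×2 texture `[[0, 1], [2, 3]]`, stored bottom row first. For this texture, `nearest_texel(tex, 0.75, 0.25)` returns `1`, and `nearest_texel(tex, 1.0, 1.0)` is clamped to the top-right texel `3`.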

Example of a 2D texture space:

Note that the OpenGL texture origin (0, 0) is in the lower-left corner. Most image formats store the upper-left pixel first, so images loaded without adjustment appear vertically flipped in OpenGL.
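There are two ways to handle the flip. The first is to reverse the row order of the decoded image before uploading it, because `glTexImage2D` treats the first row of data as the bottom of the texture. The second is to keep the data as-is and sample with t' = 1 - t. Both options are sketched below in Python. The helper names are hypothetical, and the image is assumed to be a list of rows stored top row first.

```python
def flip_rows(pixels):
    """Reverse row order so a top-left-origin image has its bottom row first."""
    return pixels[::-1]


def flip_t(t):
    """Alternative: leave the pixel data alone and flip the vertical texture coordinate."""
    return 1.0 - t
```

Flipping the data once at load time keeps every later lookup unchanged. Flipping t avoids copying the image but must be applied consistently wherever the texture is sampled.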

Texture mapping

Now that we can address locations in texture space, we want to 'wrap' our 3D models in these textures. The idea works in higher and lower dimensions, but we will consider wrapping the surface of a 3D model with a 2D texture. To do this, we map the surface of the 3D object into 2D texture space. For triangle models, this is often done by 'pinning' the texture onto the model at its vertices. For a 2D texture, the 'pins' are 2D coordinates in texture space. These are called texture coordinates or UV coordinates (since they are often named u and v).

In the example below, the UV coordinates map into the ST texture space, defining what part of the texture maps to the model surface.
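To make the pinning concrete, here is a Python sketch of a hypothetical quad. Each of its four vertices carries a position and a UV pin. The `Vertex` type and the `uv_bounds_in_texels` helper are illustrative names, not part of any library. The helper reports which region of a texture, in texels, each triangle's pins cover.

```python
from dataclasses import dataclass


@dataclass
class Vertex:
    position: tuple  # (x, y, z) in model space
    uv: tuple        # (u, v) texture-coordinate "pin"


def uv_bounds_in_texels(triangle, width, height):
    """Return the texel-space bounding box (x0, y0, x1, y1) of a triangle's UV pins."""
    us = [v.uv[0] for v in triangle]
    vs = [v.uv[1] for v in triangle]
    return (min(us) * width, min(vs) * height, max(us) * width, max(vs) * height)


# Hypothetical unit quad split into two triangles, pinned to the left half of the texture.
quad = [
    Vertex((0, 0, 0), (0.0, 0.0)),
    Vertex((1, 0, 0), (0.5, 0.0)),
    Vertex((1, 1, 0), (0.5, 1.0)),
    Vertex((0, 1, 0), (0.0, 1.0)),
]
triangles = [(quad[0], quad[1], quad[2]), (quad[0], quad[2], quad[3])]
for tri in triangles:
    print(uv_bounds_in_texels(tri, 256, 256))  # both print (0.0, 0.0, 128.0, 256.0)
```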

Inverse mapping

Texture coordinates map a surface location directly to a location in the texture. However, we apply textures to 3D models that are transformed and then drawn into a 2D image. For each pixel, we therefore need to go the other way: from the image back to a point on the surface, and from there to its UV coordinates. The model and its texture coordinates may be defined parametrically (as mathematical functions). In that case, this inverse mapping can be a complicated function that takes any point on the surface to UV coordinates, which then index into texture space. This is usually handled by the modeling software.
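For a parametric surface, the surface-to-UV mapping can be written in closed form. The sketch below assumes a unit sphere centered at the origin. It uses one common longitude/latitude (equirectangular) parametrization, which the text above does not prescribe. The name `sphere_uv` is hypothetical.

```python
import math


def sphere_uv(x, y, z):
    """Map a point on the unit sphere to (u, v) in [0, 1] (assumed lon/lat parametrization).

    u follows longitude (angle around the y axis); v follows latitude,
    with v = 0 at the south pole (y = -1) and v = 1 at the north pole (y = 1).
    """
    u = 0.5 + math.atan2(z, x) / (2.0 * math.pi)
    # Clamp y to guard against rounding slightly outside asin's domain.
    v = 0.5 + math.asin(max(-1.0, min(1.0, y))) / math.pi
    return u, v
```

For example, `sphere_uv(1, 0, 0)` gives `(0.5, 0.5)`. The mapping has a seam along the negative x axis, where u wraps from 1 back to 0.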

Most of our models will be built out of triangles. When modeling software exports a triangulated model, it also exports the UV coordinates for each vertex. To do the inverse mapping, we simply pass these coordinates through the pipeline to image space (the fragment shader), and the rasterizer interpolates them across each triangle. Then, for any surface point covered by a fragment, we have its UV coordinate and can map back to the texture.
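The per-fragment interpolation done by the rasterizer can be sketched in Python. The sketch assumes two inputs: screen-space barycentric weights for the fragment, and each vertex's clip-space w. A perspective-correct UV is then obtained by interpolating u/w, v/w and 1/w linearly and dividing. This is, by default, what happens to a GLSL vertex output (the `smooth` interpolation qualifier). The function name `interpolate_uv` is hypothetical.

```python
def interpolate_uv(bary, uvs, w_clip):
    """Perspective-correct interpolation of per-vertex UVs at one fragment.

    bary   -- screen-space barycentric weights (b0, b1, b2) of the fragment
    uvs    -- the three vertices' (u, v) texture coordinates
    w_clip -- the three vertices' clip-space w values
    """
    # Quantities divided by w vary linearly in screen space, so interpolate those.
    inv_w = sum(b / w for b, w in zip(bary, w_clip))
    u_over_w = sum(b * uv[0] / w for b, uv, w in zip(bary, uvs, w_clip))
    v_over_w = sum(b * uv[1] / w for b, uv, w in zip(bary, uvs, w_clip))
    # Divide by the interpolated 1/w to recover the actual UV.
    return u_over_w / inv_w, v_over_w / inv_w
```

When all three w values are equal, this reduces to plain barycentric interpolation. As a contrasting case, take weights `(0.5, 0.5, 0)` between a near vertex (w = 1, u = 0) and a far vertex (w = 3, u = 1). The result is u = 0.25 rather than 0.5, because the far half of the edge is compressed on screen.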